Why most AI testing tools aren't actually autonomous

Your QA team is exploring AI-driven testing. You’re seeing “AI test automation” everywhere. But here’s the problem: most of these tools still require humans to write test cases.

I’ve been watching this play out across engineering teams for months now. According to the TestGuild Automation Testing Survey 2024, 72.3% of QA teams are adopting AI testing. That’s one of the fastest adoption curves in automation testing history. But when you look closer at what these teams actually do, most still have QA engineers writing test specs by hand.

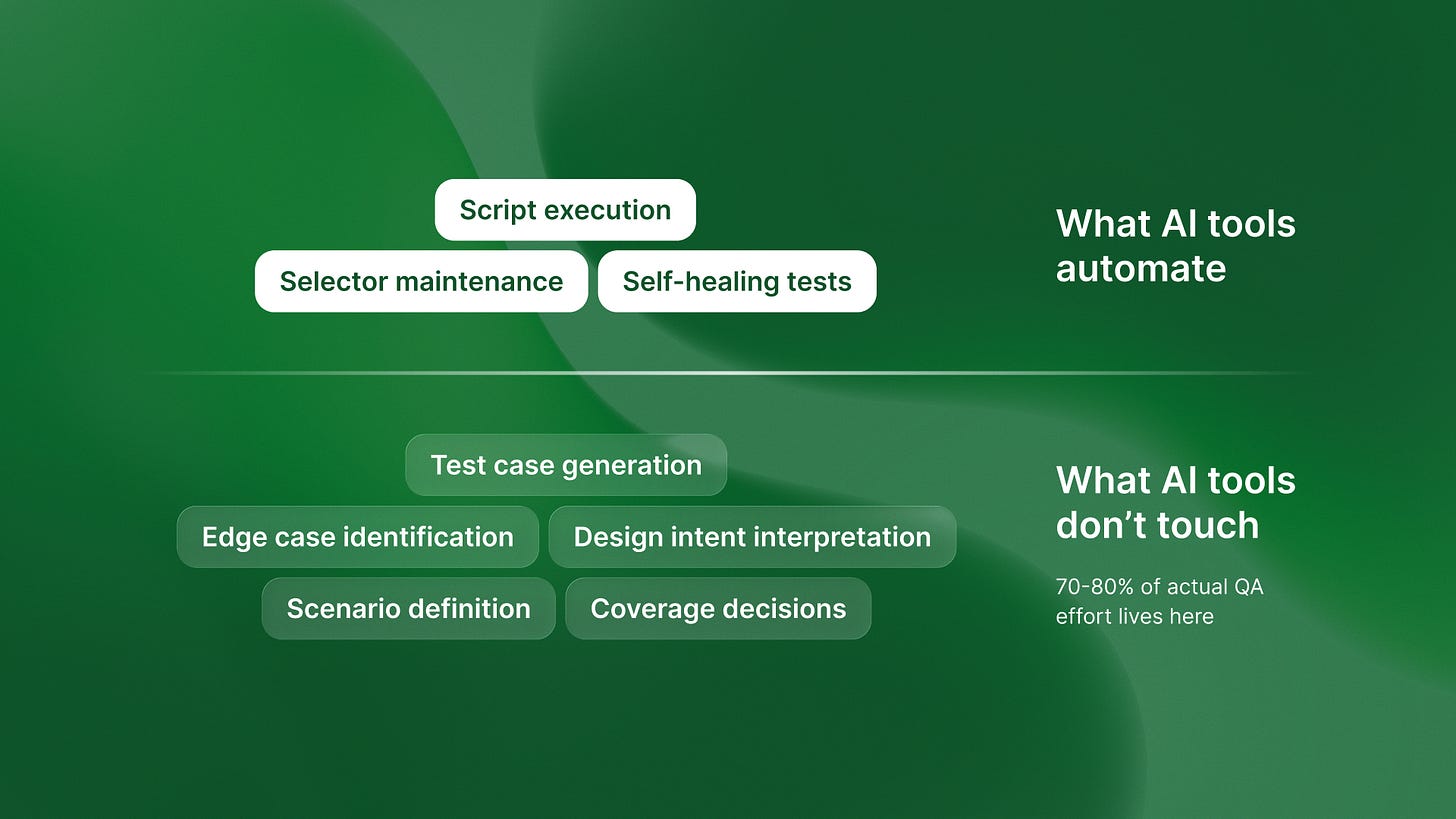

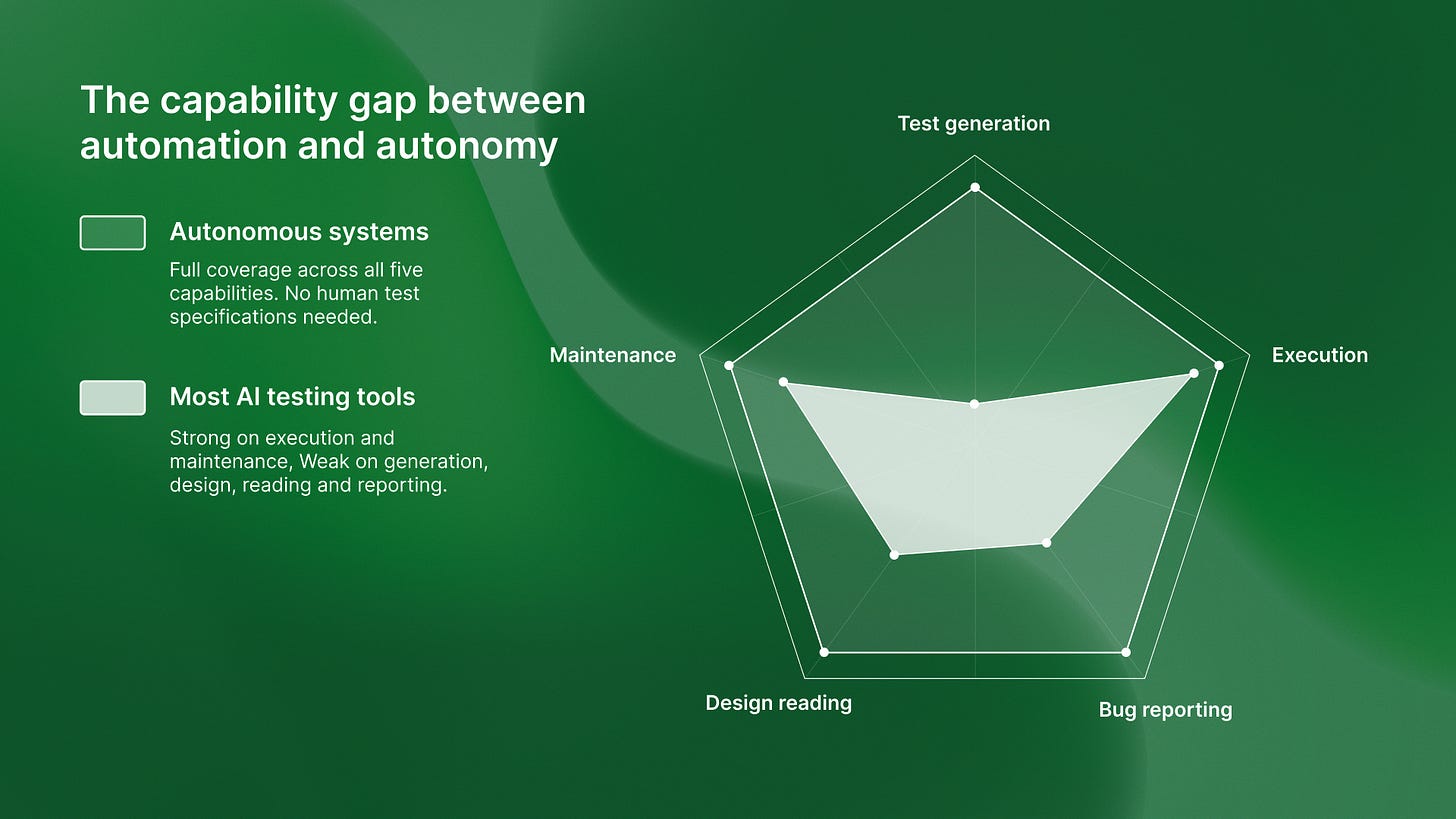

The industry has conflated two fundamentally different things: automated execution and autonomous generation. One makes your existing process faster. The other eliminates the bottleneck entirely.

Here’s why the distinction matters.

What most “AI test automation” actually does

Let’s start with what you’re probably seeing in the market right now.

Most “AI test automation” tools are adding intelligence to existing frameworks. They write smarter selectors in Selenium. They self-heal broken tests in Cypress. They suggest test improvements in Playwright. Some use GPT to generate test scripts from natural language descriptions.

The workflow looks like this: Human writes test specification, AI tool generates script, AI executes and maintains script.

Notice who’s still in that loop. The human.

You still need a QA engineer to write “test login with a wrong password” or “check that the cart updates when quantity changes.” The AI just converts that specification into executable code faster than a human could hand-write it.

This is automated execution with AI assistance. It’s not autonomous generation.

Last week I was talking to the team at Islands, and they showed me something revealing. They manage dev hours across 8-15 simultaneous client projects. When they evaluated AI testing tools for their clients, every single one still required test case definitions. The AI would generate the Playwright code. It would maintain the selectors. But someone still had to specify what to test.

The scaling constraint remained.

The autonomous testing architecture

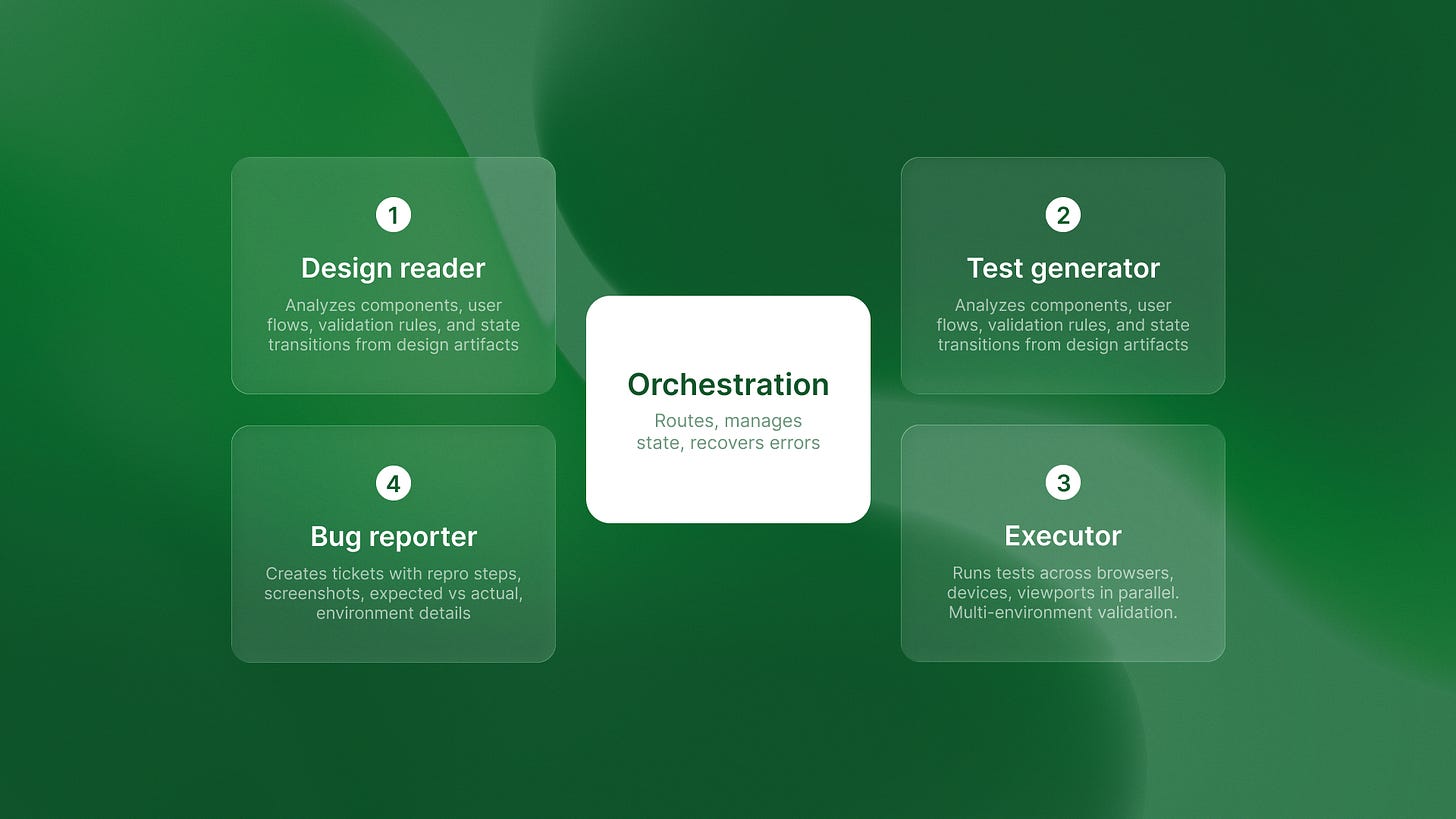

True autonomous testing works differently. The architecture is multi-agent, and the workflow is generation, execution, reporting.

Here’s what that means in practice.

Agent 1 reads design artifacts. It looks at your Figma file or GitHub commits. It understands the design intent.

Agent 2 generates test scenarios from that intent. Not from human specifications. From the design itself. If Figma shows a login screen with email and password fields, the system infers that it needs a test for an invalid email format. No one needs to write it.

Agent 3 executes those tests across environments.

Agent 4 creates production-ready bug tickets.

The human never defines the test. The system reads design intent and independently generates the test suite.
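To make the four-agent flow concrete, here is a minimal sketch in Python. Everything in it is hypothetical: the `Screen` and `Scenario` types, the function names, the dictionary shape standing in for a design export, and the two hard-coded inference rules are my own illustration, not how any particular product works. The point is only the shape of the pipeline: the input is a design artifact, and no human-written test specification appears anywhere.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Field:
    name: str
    kind: str  # assumed vocabulary: "email", "password", "text"


@dataclass(frozen=True)
class Screen:
    name: str
    fields: tuple[Field, ...]


@dataclass(frozen=True)
class Scenario:
    screen: str
    description: str


def read_design(raw: dict) -> Screen:
    """Agent 1 (hypothetical): turn a design export into a structured screen."""
    fields = tuple(Field(f["name"], f["kind"]) for f in raw["fields"])
    return Screen(raw["name"], fields)


def generate_scenarios(screen: Screen) -> list[Scenario]:
    """Agent 2 (hypothetical): infer scenarios from field kinds, not from human specs."""
    scenarios = []
    for f in screen.fields:
        if f.kind == "email":
            scenarios.append(
                Scenario(screen.name, f"reject invalid email format in '{f.name}'")
            )
        if f.kind == "password":
            scenarios.append(
                Scenario(screen.name, f"reject wrong password in '{f.name}'")
            )
    return scenarios


def execute(
    scenarios: list[Scenario], runner: Callable[[Scenario], bool]
) -> list[tuple[Scenario, bool]]:
    """Agent 3 (hypothetical): run every scenario with an injected runner."""
    return [(s, runner(s)) for s in scenarios]


def file_tickets(results: list[tuple[Scenario, bool]]) -> list[str]:
    """Agent 4 (hypothetical): produce one ticket title per failed scenario."""
    return [f"[{s.screen}] Failed: {s.description}" for s, passed in results if not passed]


def run_pipeline(raw: dict, runner: Callable[[Scenario], bool]) -> list[str]:
    """Generation, execution, reporting, with the design as the only input."""
    return file_tickets(execute(generate_scenarios(read_design(raw)), runner))


# Stand-in for a design export; the dictionary shape is an assumption.
login = {
    "name": "Login",
    "fields": [
        {"name": "email", "kind": "email"},
        {"name": "password", "kind": "password"},
    ],
}

# Fake runner that fails any scenario mentioning "email", to simulate one bug.
tickets = run_pipeline(login, runner=lambda s: "email" not in s.description)
```

A real system would replace the two `if` rules with something far richer, and the runner with an actual browser driver. The structure is what matters here: the only thing a person supplies is the design.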

This is what Gartner is forecasting when they say AI agents will independently handle 40% of QA workloads by 2026. Not just execution. Generation.

I noticed this distinction clearly when looking at QA flow. The platform generates tests directly from Figma designs. You connect your design file. The system analyzes the UI. It creates test scenarios without test case templates. That’s autonomous generation, not AI-enhanced scripting.

The difference is architectural. One system assists human test writers. The other replaces the need for human test writing entirely.

Why the Distinction Matters for Engineering Velocity

Let’s talk about bottleneck math.

Traditional automation speeds up test execution. If you can run 1,000 tests in parallel instead of sequentially, you save time. But you still need someone to write those 1,000 tests.

AI-enhanced automation makes writing tests faster. GPT can convert “test login” into Playwright code in seconds. But you still need QA engineers proportional to your development velocity. More features means more test specifications to write.

Autonomous generation breaks this constraint.

If the system generates tests from design artifacts, the effort of writing tests becomes constant rather than linear in the number of features. Whether your team ships 10 features or 100, the system reads the Figma files and generates the tests either way.
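The bottleneck math can be sketched in a few lines of Python. The numbers are placeholders I made up for illustration (2 hours of spec writing per feature, 8 hours of one-time setup), and modeling autonomous generation as a flat cost is an idealization of the argument, not a measurement.

```python
def spec_writing_hours(features: int, hours_per_feature: float) -> float:
    """Human-written specs: effort grows linearly with features shipped."""
    return features * hours_per_feature


def autonomous_hours(features: int, fixed_setup_hours: float) -> float:
    """Generated tests: human effort modeled as a fixed cost, independent of features."""
    return fixed_setup_hours


# Illustrative assumptions only, not figures from any survey or vendor.
HOURS_PER_FEATURE = 2.0
FIXED_SETUP_HOURS = 8.0

for features in (10, 100):
    manual = spec_writing_hours(features, HOURS_PER_FEATURE)
    generated = autonomous_hours(features, FIXED_SETUP_HOURS)
    print(f"{features} features: {manual:.0f}h of spec writing vs {generated:.0f}h")
```

Under these assumptions, going from 10 to 100 features multiplies spec-writing effort by ten while the generated path stays flat. Swap in your own team’s numbers to see where the lines cross.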

The ThinkSys QA Trends Report says 80% of software teams will adopt AI-driven testing by 2025-2026. That’s good. But if those teams buy automated execution thinking it’s autonomous generation, they won’t solve their scaling problem.

They’ll just execute the wrong tests faster.

I saw this at Timecapsule recently. They track dev hours in real-time with profitability monitoring. When they reviewed testing tools, the math was clear: AI-assisted Selenium could speed up test maintenance by about 30%. But they’d still need manual test case definition for every new feature. Autonomous generation would eliminate that manual step entirely. The ROI calculation wasn’t even close.

How to Evaluate “Autonomous” Claims

Here’s how to cut through the marketing when evaluating AI testing tools.

Ask: Does the system require humans to write test specifications?

If yes, it’s automated execution, not autonomous generation. It doesn’t matter how smart the script generation is. The bottleneck remains.

Ask: Can the system generate tests from design artifacts without test case templates?

If no, it’s AI-assisted scripting, not autonomy. You’re still defining what to test. The AI is just helping you write it.

Ask: Does the system handle generation AND execution AND reporting autonomously?

Partial autonomy doesn’t eliminate bottlenecks. If the system generates tests but requires manual bug triage, you’ve moved the bottleneck, not removed it.

Autonomous means independent decision-making across the full workflow. Not just intelligent assistance at one step.
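The three questions above can be written down as a small decision function. This is a sketch with hypothetical names: the `ToolProfile` fields and the category labels are mine, chosen to mirror the questions, and a real evaluation would need evidence behind each boolean.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolProfile:
    """Answers to the evaluation questions for one tool (hypothetical fields)."""
    requires_human_specs: bool
    generates_from_design: bool
    autonomous_execution: bool
    autonomous_reporting: bool


def classify(tool: ToolProfile) -> str:
    """Apply the questions in order; the first failed check decides the label."""
    if tool.requires_human_specs:
        return "automated execution"
    if not tool.generates_from_design:
        return "AI-assisted scripting"
    if not (tool.autonomous_execution and tool.autonomous_reporting):
        return "partial autonomy"
    return "autonomous"


# Example: generates and runs tests from designs, but bug triage is manual.
triage_gap = ToolProfile(
    requires_human_specs=False,
    generates_from_design=True,
    autonomous_execution=True,
    autonomous_reporting=False,
)
label = classify(triage_gap)
```

The ordering is deliberate: a tool that still needs human-written specs is classified on that alone, no matter how capable the rest of it is.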

Some testing will always require human judgment. Exploratory testing. UX validation. Domain-specific edge cases. That’s fine. The question is whether the system can handle the repetitive regression testing that currently consumes 70-80% of QA effort.

If it can’t generate those tests from design artifacts, it’s not autonomous.

The Real Promise of Autonomous Testing

The future of QA isn’t about running tests faster. It’s about not writing them at all.

72.3% adoption shows the demand is real. Teams know AI will transform testing. But they need to understand what they’re actually adopting.

Automated execution makes your existing process more efficient. You still write test cases. You still maintain test suites. You just do it faster.

Autonomous generation eliminates the need for human-defined test cases entirely. The system reads design intent and generates tests independently.

One approach optimizes the bottleneck. The other removes it.

When you’re evaluating AI testing tools, ask which problem they’re solving. Most are making test execution smarter. A few are making test generation autonomous.

The architectural difference matters. Because autonomous testing isn’t about running tests faster. It’s about not writing them at all.